John McCormick writes: news of a couple of surveys was e-mailed to the Salut! Sunderland team recently. The first e-mail was more or less a repeat of something we had a little while ago, from a website calling itself Dirtyplayers.co.uk. The second was new to me. We’re happy to share their findings but if I am honest, I’m not impressed by either survey and feel one, and only one, could have value.

The dirtyplayers.org website originally reported, among other things, that Gareth Barry had accumulated the most ‘Dirty Points’ in the history of the Premier League and that Lee Cattermole was the dirtiest player based on number of appearances. The e-mail we got this week followed this up by telling us that Gareth Barry had picked up his 121st yellow card in the Spurs – West Brom game, and that he’d played 131 games for Everton, who had recently been branded the dirtiest team in Premier League history.

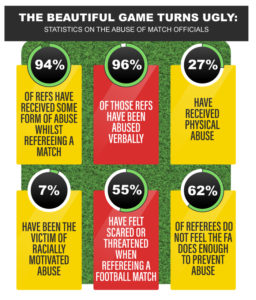

The second, from ticketgum via journalistic.org reported that (up to) 94 per cent of refs surveyed by ticketgum reported being abused during games …

So what do you think of all that?

In the case of the dirty players report, I have to say “so what?” Such reports will provide ammunition in the pub, pre-match and at work but when will anyone who supports any particular club agree with the opposition fans that “your player is dirtier than ours” – Catts v Tiote, anyone?

And I think any half-sentient fan will have enough nous to challenge the basis of the Dirtyplayers findings. From what I’ve read, a red card was awarded 25 points and a yellow card five, with totals for players being added up.
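To make the scoring concrete, here is a minimal sketch of the points system as described above: 25 for a red, five for a yellow, summed per player. The function name and the two players' card counts are invented for illustration and are not taken from the Dirtyplayers data.

```python
# Points per card as described in the article; everything else is hypothetical.
YELLOW_POINTS = 5
RED_POINTS = 25


def dirty_points(yellows, reds):
    """Return the summed 'Dirty Points' for a player's career card counts."""
    return YELLOW_POINTS * yellows + RED_POINTS * reds


# Two made-up players with very different disciplinary records.
player_a = dirty_points(yellows=10, reds=0)  # ten cautions, never sent off
player_b = dirty_points(yellows=0, reds=2)   # two dismissals, never cautioned
print(player_a, player_b)
```

Both invented players finish on the same total, which shows how a single summed score can hide what kind of offences were actually committed.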

What I’d like to know is why a red card is worth five yellows when two yellows in a game are worth only one red. And is a straight red for a nasty over-the-top leg breaker worth exactly the same as the one that should result from removing a shirt after scoring then kicking the ball away after someone else’s foul? In fact, as I said in response to Colin Randall’s first post here:

- There’s dirty as in tackles that are designed to take a player out of a game, or at least reduce effectiveness.

- There’s dirty as in sly underhand tricks and tactics which will get other players a red or yellow card, such as the use of whispered racist language or falling over and/or clutching the face when hit in the chest

- There’s dirty as in elbows when the ref’s not looking

- There’s dirty as in shirt pulling in the box at corners

- There’s dirty as in the so called professional foul – “taking one for the team”

- Then there’s not being good or fast enough, so players get hit instead of the ball. That’s not dirty, just the way things are when your opponents are so elite.

And which of these get the cards?

I’m sure you could come up with criticisms of your own but I don’t need any more. In my opinion the metric used above falls some way short of being a valid measure of dirtiness. In the words of my late lamented friend John Bowers, “it’s not worth a carrot”.

The second report, the ticketgum survey, is much more serious. So much so that I wish ticketgum had done it properly, or at least made the limitations of their findings more obvious.

Ticketgum surveyed 300 referees. From their results they made a number of claims, including that one above, as you can see in their graphic:

Now, I’ve written about abuse of refs, on this site, myself. It’s bad news and something must be done. I have no doubt that refs are abused, and that many leave the game because of it. Nevertheless, I have concerns about the ticketgum survey, which could have made a useful contribution to the debate.

The Telegraph published an interesting article on referees in 2009 which said that there were over 50,000 qualified referees in the country. I haven’t been able to find a more up-to-date figure so that’s what I’ll go with, but please note the lack of more recent data is a serious weakness in my criticism (and can you see what I’m doing here?). With 50,000 referees to survey, 300 is a very small sample. It’s so small that the possibility that the results are way out cannot be ignored. Ticketgum’s claims could be nothing more than rubbish.

Mathematicians get round such possibilities by using confidence levels (if they did the survey again, how confident are they that they’d get the same results) and margins of error (pluses and minuses on either side of the quoted figure) to indicate the potential for variation.
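For readers who want to see what such a figure looks like, here is a rough sketch of the textbook margin-of-error calculation for a survey percentage. It rests on assumptions the press release does not establish: that the 300 referees were a simple random sample, that the population is the 50,000 quoted by the Telegraph in 2009, and that the normal approximation is acceptable for a percentage as high as 94. The function and parameter names are illustrative only.

```python
import math


def margin_of_error(p, n, population=None, z=1.96):
    """Approximate margin of error for a sample proportion.

    p: reported proportion (0.94 for 94 per cent)
    n: number of people surveyed
    population: total population size, if known (applies a finite
        population correction); assumed, not confirmed by ticketgum
    z: z-score for the confidence level (1.96 for about 95 per cent)
    """
    standard_error = math.sqrt(p * (1 - p) / n)
    if population is not None:
        # Finite population correction: shrinks the error slightly
        # when the sample is a noticeable share of the population.
        standard_error *= math.sqrt((population - n) / (population - 1))
    return z * standard_error


# Figures from the article: 94 per cent, 300 referees, 50,000 in total.
moe = margin_of_error(p=0.94, n=300, population=50_000)
print(f"94% plus or minus {moe * 100:.1f} percentage points")
```

Under those assumptions the calculation gives roughly 2.7 percentage points either way at the 95 per cent level. That number only describes sampling variation; it says nothing about bias from how the referees were chosen or how the questions were asked, so it cannot stand in for a published methodology.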

There’s also the question of how the survey was undertaken. According to their press release, Ticketgum.com spoke to almost 300 referees, from various levels, about their treatment during a match.

How did they select them? Were the referees rung up at random, from all around the country? Or were they invited to ring up themselves? Or was some other method chosen, such as sending an interviewer to a referees’ meeting at county level? Were they all asked the same questions? Were terms described accurately? There is a host of possibilities which could have affected the nature of the referees’ responses.

I’d want ticketgum to publish their confidence levels, margins of error and methodology before I gave these results any serious consideration. If they had done so, then even with a sample size of 0.6 per cent, and with a large margin of error, they might have been able to report credibly that they had investigated the issue of referee abuse and that their results suggested the scope of a problem that we all know exists and which needs serious investigation, not to mention action, by the Football Association.

It wouldn’t have been too difficult to do that, would it?

** Colin Randall, editor of Salut! Sunderland, adds: I do not suppose we will win any friends among the researchers concerned beyond the extent to which they may welcome any mention, however critical, of their findings.

John McCormick, our associate editor, is something of an authority on the use of statistics so his voice deserves to be heard when he feels they have been obtained or interpreted with less rigour than he would apply.

It goes without saying that we would willingly publish any reasoned – and reasonable – response, again however critical, to his assessment.

It’s more important to look at the subject of the article, rather than an academic analysis of the validity of the statistics which just happen to be an interest of commenters here.

Those of us who attend live games at all levels are well aware of the abuse faced by referees, to the extent that recruitment is a major problem.

Sadly, this all just reflects the lack of respect for authority pervading modern society especially by (not all) younger people.

Only stricter sanctions demonstrating the unacceptability of such behaviour are appropriate – the basic premise of the game whereby the referee’s decision is final is under threat.

After all, if everything was clinically correct, what would we have to talk about in the pub afterwards, at work the next day and in online articles such as this?

I tend to agree with you and have no real interest myself in the mathematics underpinning the articles, which were sent unsolicited to M Salut, but I would question the need to open up what is essentially a topic for discussion in a quasi-scientific way, unless of course it is a way of getting around the laws of libel.

I question the absence of John Terry from the list of dirty players for example. He was an expert at using any means fair or foul at defending corners and free kicks and usually got away with it.

Anyone know a good lawyer?

The abuse of referees is of course a serious matter and there is plenty of anecdotal and observational evidence to make me wonder what the objective of that particular survey was. If it is to highlight to the F.A. the need for change then perhaps it should be more rigorous, with hard evidence; otherwise the conclusions drawn come as no surprise.

I have written about abuse of refs. It’s an important area. However, IMO, extreme claims need to be justified. What would you think if a law firm called ambulancechasers.com said 94 per cent of drivers had been injured in a crash?

In the ticketgum case that figure probably has meaning, but let’s be sure.

M Salut is too generous. I wouldn’t say I’m an authority on anything. However, I do reserve the right to comment on, and point out shortcomings in, material presented in such a way as to suggest its conclusions are valid when they may not be.

I really shouldn’t need to but….

It’s unusual to go to any match where the ref isn’t abused! Even some parents at the Under 11 matches I used to officiate at could get worked up about nowt.